Authored by Yaffa Shir-Raz via Brownstone Institute

“I need to ask someone else to take responsibility for the second part of the approvals process, so that I won’t have a conflict of interest. I’m also working with Bill Gates and the World Health Organization on the vaccine itself.”

This admission of a conflict of interest was made by Prof. Lester Schulman, secretary of the Ministry of Health’s polio committee, in March 2023, during an internal discussion about approving the importation into Israel of a new polio vaccine. The vaccine was developed and promoted by the World Health Organization in collaboration with the Bill & Melinda Gates Foundation, and its approval pathway relied on a new emergency authorization mechanism the WHO has developed in recent years: the EUL (Emergency Use Listing).

Although the remark was framed as a technical aside, it was an unusual confession of a conflict of interest by the committee’s secretary. Its seriousness is compounded by the fact that it was made only after the committee had already voted by an overwhelming majority to initiate the process of bringing the vaccine to Israel, and after it had already worked vigorously to persuade the Pharmaceutical Division to cooperate.

The quotation does not appear in the official minutes of the meeting that were provided to us. It is heard on an audio recording of the session, one of several recordings passed on to us by a whistleblower. The minutes were provided only following a Freedom of Information request and subsequent litigation.

The episode is serious in its own right. But it goes far beyond a local episode of personal conflict of interest or an administrative failure within Israel’s health system. The materials point to something more consequential: the use of an international emergency authorization pathway to shape regulatory decisions inside a sovereign state, advanced through overlapping professional networks, without the organization assuming the legal responsibilities borne by national regulators.

In the United States, recent political debates over withdrawal from the World Health Organization were widely framed as a clash between scientific consensus and institutional criticism. Yet the Israeli case, and the materials in our possession, point to a much larger picture.

This was the first implementation of the EUL mechanism within a country with a functioning Western regulatory system. Israel served here as a regulatory test case: an attempt to determine whether it is possible, in practice, to shape an approval pathway inside a sovereign state without holding formal regulatory authority and without being subject to the judicial and parliamentary oversight that applies to a national regulator. The case thus exposes how the WHO has been operating in recent years: no longer merely as an advisory and coordinating body, but as an institution that creates operating frameworks that, in practice, shape approval processes inside sovereign states.

The EUL: An Emergency Mechanism or a De Facto Regulatory Infrastructure?

The World Health Organization was established in 1948 as an intergovernmental body tasked with providing professional assistance and technical guidance, promoting research, collecting knowledge, and developing recommendations for its member states. Article 22 of the WHO Constitution leaves states the right to opt out of its regulations, a clear indication that the organization was not granted regulatory powers such as authorizing drugs and vaccines or supervising their manufacture. These areas remained the exclusive responsibility of states themselves, which also bear legal and public responsibility for the decisions of their national health authorities.

In recent years, the WHO has developed mechanisms that expand its influence beyond recommendations and, in effect, enable it to directly influence regulatory authorization processes within states. The central mechanism is the EUL, an independent WHO emergency procedure that is not part of national authorization systems.

According to the organization’s documents, the EUL is defined as a temporary, risk-based authorization for the use of unapproved medical products in emergency situations where no approved product is available, and on the basis of partial data on quality, safety, and efficacy. These documents emphasize that the EUL is not licensure, and that it does not replace national regulatory authorization.

But what is defined as a temporary bridge measure that does not replace national regulation becomes, in practice, an operating framework. Once EUL is activated, it maps out the timetable, the milestones, and the starting point of the discussion. This restructuring of the decision-making process also generates pressures that extend beyond the initial authorization stage. As Dr. David Bell, a former WHO medical officer, notes: “Once a product has been granted emergency authorization and widely deployed, there is strong institutional pressure to overlook its limitations and proceed toward full approval, as reversing course may carry significant professional and reputational risks.”

Instead of a regulator initiating an independent process based on its own data and judgment, it operates within a workflow whose structure has already been defined in the international arena.

The institutionalization of the EUL reflects a broader shift in regulatory practice. During Covid-19, emergency authorization became the operative pathway for deploying novel vaccines at population scale within Western regulatory systems. That experience established the practical legitimacy of approving and distributing vaccines on the basis of interim data under declared emergency conditions. A regulatory model tested within sovereign systems had become normalized.

The EUL translates this logic to the international level. It creates a structured emergency pathway through which products can advance prior to conventional Western licensure. Once activated, the pathway structures expectations, timelines, and decision points for states considering adoption.

Under the International Health Regulations (2005), a Public Health Emergency of International Concern is defined primarily in relation to international spread and coordinated response, without a quantified severity threshold. During the 2009 H1N1 pandemic, controversy arose over the WHO’s pandemic phase definitions, which emphasized geographic spread rather than clinical severity. Where emergency criteria are flexible, the declaration carries procedural consequences: it opens access to accelerated authorization mechanisms. Over time, this flexibility has lowered the practical threshold for invoking emergency-based authorization mechanisms.

Rather than independently constructing a full evidentiary assessment from first principles, states deliberate within a predefined emergency framework. The activation of the pathway reorders the sequence of decision-making. Questions of timing, alignment, and external validation take precedence over the threshold question of whether the evidentiary basis would independently justify authorization under ordinary regulatory standards.

nOPV2: The First Implementation of the Mechanism

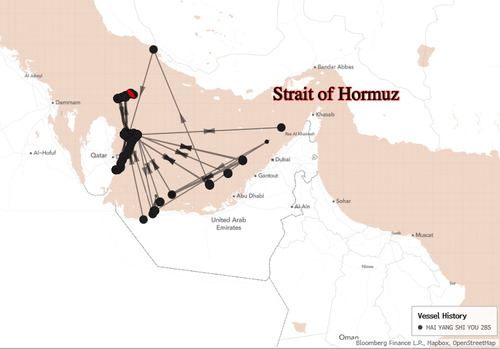

The nOPV2 polio vaccine discussed in Israel was the first product to receive EUL status from the WHO. The listing was granted on November 13, 2020, making the vaccine the first implementation of the new procedure. Beginning in March 2021, it was deployed in Nigeria and later in additional countries in Africa and Asia.

The vaccine is manufactured in Indonesia by a company called Bio Farma. Its development and clinical studies were funded by the Bill & Melinda Gates Foundation, which also committed $1.2 billion to “support efforts” to advance it, as part of the Polio Eradication Strategy 2022–2026.

On December 21, 2023, the vaccine also received WHO Prequalification (PQ) status. This procedure is not national licensure and is not equivalent to approval by a stringent Western regulator. It is a WHO assessment mechanism that enables UN agencies and countries to rely on it for procurement and use through international health mechanisms. Although PQ is not part of EUL, in practice it signals a shift from a temporary emergency framework to a broader and continuing distribution pathway that no longer depends on the declaration of a specific emergency.

The trajectory of nOPV2 illustrates more than the introduction of a new vaccine. It demonstrates the operationalization of an emergency-based authorization model beyond a single national regulator. A product listed under an international emergency mechanism progressed from provisional deployment to broader institutional endorsement, without passing through the conventional sequence of Western licensure. It is this pathway that was subsequently introduced into Israel’s regulatory deliberations.

How the International Pathway Was Embedded Inside the Ministry of Health

The discussions in the ERT committee make it possible to examine how the EUL pathway was integrated in practice into decision-making inside Israel’s Ministry of Health.

The ERT (Emergency Response Team) committee at Israel’s Ministry of Health was established in March 2022 as an advisory committee to manage the response to a polio outbreak detected in sewage testing in Israel. The committee’s mandate included receiving ongoing updates, formulating operational recommendations, adapting vaccination policy, and managing public information efforts. The committee is chaired by Prof. Manfred Green, head of the International Public Health Leadership Program at the University of Haifa’s School of Public Health, and its secretary is Prof. Lester Schulman, an epidemiologist who headed the Central Environmental Virology Laboratory at Sheba Medical Center (Tel Hashomer).

In its early deliberations, the committee dealt with poliovirus type 3, which, according to its documents, originated from the live-attenuated vaccine. Even in these discussions, there is already a clear sensitivity to the WHO’s position. The committee chair explicitly states that if Israel does not launch a vaccination campaign, it may be perceived by the WHO as a “rogue” state. This perception does not need to be imposed externally. It emerges within a shared professional environment in which deviation is experienced not merely as a policy disagreement, but as a departure from the norms of the group. These dynamics are consistent with observations from within international health institutions.

As Dr. David Bell, a former WHO medical officer, notes: “Delegates in international health forums are often not acting primarily as national representatives. They are part of a large professional network, trained in similar institutions, meeting regularly, and sharing a common worldview. These networks are supported by major private funders and institutional partners, which further reinforces alignment across countries.

“Within these networks, dissenting positions are often perceived as unscientific or backward, creating strong pressure to align. Countries may be reluctant to deviate for fear of appearing outside the accepted consensus.”

Bell further characterizes this process as a form of soft power operating through institutional culture rather than formal authority: “This is how soft power operates: shared incentives, professional culture, and support from major funding bodies allow preferred approaches to spread across systems, often without the need for formal coercion.”

Accordingly, the team recommended a “Two Drops” campaign using the existing live-attenuated vaccine (OPV3). The campaign began in April 2022 and was halted two months later. Although uptake among the primary target population was minimal, the Ministry presented the campaign as a success and announced the elimination of the strain from sewage surveillance.

Shortly thereafter, the Ministry of Health announced that, immediately after type 3 was eliminated, poliovirus type 2, which also derives from a live-attenuated vaccine, had been detected in sewage. Although no paralysis cases from this strain have been found in Israel to date, the ERT committee began as early as mid-2022 to consider using the new nOPV2 vaccine. At first the option arose as a general reference, but it soon became the central axis of the discussion.

At this stage, the discussion already linked epidemiological assessment to procedural consequences. Even in the absence of clinical cases, escalation was considered in relation to the regulatory options it would make available.

From late summer 2022, the nOPV2 approval pathway was presented to committee members in several meetings. The minutes we received indicate that the discussion was based on presentations and background materials provided by the WHO. They contain no record of a full manufacturer dossier, independent regulatory data, or an opinion from any Western regulatory authority.

On December 1, 2022 (Minutes ERT 21), the ERT committee voted by an overwhelming majority to initiate the process of bringing the new vaccine to Israel. According to the minutes, 14 of 15 committee members voted in favor of the recommendation, as did all six Ministry of Health representatives who participated in the vote. At that point, the principled decision had been made. The discussion shifted from whether to adopt the pathway to how to implement it.

Immediately following the vote, the regulatory question shifted to implementation and to the procedural steps required to activate the pathway. In a committee discussion held on February 28, 2023, Dr. Sharon Alroy-Preis suggested that a formal emergency declaration might be necessary in order to enable the relevant authorization track, remarking that “perhaps if there are two clinical cases we will be able to persuade the minister to declare an emergency.” The exchange indicates that the declaration of emergency was discussed in direct connection with the procedural track it would enable.

Conflicts of Interest on the Committee: Advisers to the WHO Leading the Recommendation to Bring the Vaccine to Israel

During the months in which the committee’s secretary, Prof. Schulman, presented the pathway for bringing nOPV2 to Israel, the committee members were not presented with information about his conflicts of interest with the WHO and the Bill & Melinda Gates Foundation. In practice, during that period, Schulman served as a technical consultant on the vaccine through McKing Consulting Corporation, a professional contractor working on projects of the WHO and the Global Polio Eradication Initiative (GPEI), which is centrally supported by the Gates Foundation.

He also received a support grant from the WHO, likewise for consulting related to nOPV2. Moreover, he received travel funding from the Bill & Melinda Gates Foundation to participate in dedicated nOPV2 working meetings in London in February 2023; that is, precisely during the period relevant to the ERT committee’s deliberations on the vaccine. He is also a co-author of an international scientific publication from June 2023 that was produced with the support of the WHO, GPEI, and the manufacturer Bio Farma.

In other words, this was not a general affiliation or a distant professional past. It was a direct, built-in conflict of interest related to the specific vaccine under discussion and to its unusual approval pathway under EUL. Schulman declared these ties in official scientific publications, but they were not brought to the committee’s attention in real time, even as he was the one presenting the approval pathway and leading the professional discussion. When the Ministry of Health was asked to address the issue, both in the Freedom of Information process and in a formal request to the spokesperson, it responded categorically that no conflicts of interest existed in the committee. This response contradicts Schulman’s own public declarations.

Only about three months after the vote, during a further discussion held on February 28, 2023, Schulman asked that “someone replace me” in the continuation of the approvals process, so that he “won’t have a conflict of interest.” He raised it as though it were a minor technical matter rather than a substantive failure, and did not address the fact that the principled decision had already been made on the basis of materials and a regulatory framework that he himself had helped advance internationally. Moreover, even after this admission, Schulman’s conflicts of interest were not documented in the meeting minutes that were provided to us following the FOI litigation.

Schulman was not the only senior committee member with a conflict of interest involving the WHO. The committee chair, Prof. Manfred Green, recently acknowledged in a Knesset Health Committee discussion that his partner, Prof. Dorit Nitzan-Kaluski, also serves on the polio committee. Indeed, Prof. Nitzan-Kaluski is listed as a member already in the original appointment letter, in March 2022. Yet only a month before that appointment, in February 2022, she formally completed her senior role as the WHO Regional Emergencies Director for Europe. Moreover, a few weeks later, with the outbreak of the war between Russia and Ukraine, she returned to intensive professional activity on behalf of the WHO as an Incident Manager in Ukraine, a role she performed in parallel with her membership in the committee.

This becomes all the more problematic when the committee chair presents the WHO’s position to members as an “ultimatum” that must be adopted, without full disclosure that his partner, serving alongside him, is a senior operational figure within that same organization.

This is not merely a matter of personal ethics. The committee secretary, who drafted, promoted, and led the presentation of the vaccine and the EUL pathway to Israel, was simultaneously active internationally in advancing them, while the committee chair adopted the same framework. The result was that Israel’s decision-making unfolded within the same professional network that promoted the vaccine and its approval pathway in the international arena.

Under such circumstances, it is difficult to speak of independent national regulatory judgment when the same actors are involved in promoting the pathway both globally and within the Israeli deliberations.

“Who Will Blink First”

In stark contrast to the confidence with which the vaccine’s safety and manufacturing integrity were presented in the ERT committee, the minutes show that the Pharmaceutical Division, Israel’s competent regulatory authority for authorizing medicines and vaccines, expressed reservations, and even opposition, at an early stage.

This opposition was mentioned several times during the discussions, including the reasons raised by division staff: the absence of licensure by any Western country; the fact that the vaccine is manufactured in Indonesia, a country to which Israel’s Ministry of Health has no direct regulatory access, meaning it cannot independently examine manufacturing conditions at the plant; and reliance on an emergency mechanism that had not yet been completed.

In one discussion, Dr. Sharon Alroy-Preis describes the Pharmaceutical Division’s position unambiguously: “Our pharmacy division refuses at this stage to take a vaccine or to approve a vaccine coming from Indonesia with no Western regulatory process at all. That’s a very, very big obstacle…Right now our pharmacy division is saying: ‘We will not approve such a thing. It doesn’t look to us like it meets any standards that we can approve.’”

Yet these reservations were not presented as a regulatory red line, but as a problem to be “solved” in order to continue advancing within the EUL pathway. The regulator’s opposition did not stop the process. It was framed as an operational obstacle.

This produced a reversal of roles: an advisory committee effectively shaped the regulatory pathway, while the body legally authorized to approve or reject vaccines was expected to adapt to a framework that had already been set, and at times to justify its own resistance.

Against this backdrop, the discussion focused on finding an external regulator that would provide legitimacy, first and foremost the UK regulator. The minutes repeatedly refer to Britain as the country that might approve the vaccine before Israel. For example, in one discussion (ERT 17), Prof. Ian Miskin, one of the committee members, states explicitly that “we probably shouldn’t be the first in the West to use nOPV2,” and that while the United States likely would not use the vaccine, “the UK might.” In the February 28, 2023 discussion, Dr. Sharon Alroy-Preis framed the situation even more explicitly: “Maybe we’ll challenge them – every country that hears ‘Indonesia’ doesn’t want to be the first…so everyone is waiting to see who will blink first.”

This dynamic captures the position local decision-makers found themselves in within this mechanism. Although they did not have the basic data necessary for a proper regulatory authorization of a vaccine, they did not challenge the pathway itself. Instead, they searched for a Western country to provide the first stamp of approval. The international framework had already been accepted as the starting point. The remaining question was only which country would supply the legitimacy that would allow others to follow. In such a setting, the scope of criticism narrows. The focal point is no longer vaccine safety or manufacturing quality, but joining a pathway already defined, and the fear is not scientific error but deviating from the line.

This dynamic cannot be explained solely by institutional caution. Once the EUL framework was accepted as the operative reference point, deliberation shifted from independent evidentiary assessment to questions of timing and alignment. The regulatory threshold itself was no longer the central issue. What mattered was whether, and by whom, the pathway would first be validated in the West. The architecture of decision-making had already been set.

“We Got Nothing, Nothing, Nothing Except WHO Presentations”

About two months after the ERT committee had already voted and made a principled decision to advance the introduction of nOPV2 to Israel under the EUL mechanism, and after months of discussions in which the gap between the pathway mapped out in the committee and the regulator’s position only widened, Dr. Alroy-Preis asked to invite Dr. Ofra Axelrod, head of the Pharmaceutical Division, to explain her opposition.

In the subsequent discussion, when Dr. Axelrod did come to the committee and systematically presented the data and information available to the Pharmaceutical Division, it became clear that the gap was far larger than could be inferred from earlier discussions. What she laid out showed that this was not merely a discrete regulatory disagreement or a “difficulty” that could be bridged, but an absence of the basic regulatory data required to evaluate vaccine safety, manufacturing integrity, and the regulatory pathway itself.

At the outset, Axelrod clarified what constituted the division’s evidentiary base: “We, of course, got nothing, nothing, nothing except WHO presentations. On the basis of that, to approve something, that won’t pass.” In effect, she revealed that these presentations were the only materials presented to the committee and had served as the basis for the vote to begin the approval process for importing the vaccine into Israel.

Contrary to the impression created earlier, in which the vaccine was presented as being on an advanced pathway, Axelrod described a vaccine that was “still in a clinical trial…a vaccine at a very, very early stage…it doesn’t even have prequalification, which is really the most basic approval there is.” She also addressed the vaccine’s status under EUL and noted: “There was an initial recommendation in 2020, and since then no final decision has been made…”

Even the hope that a Western country would soon authorize the vaccine proved, according to her, unfounded. “Right now, because of the gaps and lack of information, the British do not intend to approve use of this vaccine in the UK. Even if it becomes truly essential, and maybe even a temporary approval, it’s very challenging. After that conversation we asked the British for materials. There was nothing; they did not pass anything on. In early February we approached the British again, and the answer was very evasive. The answer was: ‘We’ll try to create a direct connection for you with the company.’ Since then we haven’t heard anything, not from the British and not from the company.”

As for manufacturing itself, Axelrod described a plant not recognized by Western regulatory authorities, and a picture of inadequate regulatory oversight. “The plant is not recognized, it manufactures vaccines for the developing world, for WHO countries…the manufacturing company avoided direct contact with the MHRA, the UK regulator. They did not give them a dossier or any information they received directly from the company…eventually, the British managed to get the company’s agreement to conduct a GMP inspection. The British visited the company and found gaps. They did not specify what they were. And the company has not undergone any GMP inspection by an authority we recognize…”

Beyond the regulatory gaps, Axelrod’s comments also illuminate the lack of transparency in the committee’s work. She told the committee that a Freedom of Information request had already been filed with the Ministry concerning the vaccine discussions. “I have to share with you that we already received an FOI request about this vaccine. We haven’t approved anything yet, and already people are asking us: why and how and who and what.” The committee’s minutes were not published to the public in real time. They were provided only following an FOI request and prolonged litigation. The fact that the information was exposed only in this way makes clear that the discussion was not accompanied by proactive transparency from the Ministry.

A Test Case for a New Model

As serious as the Israeli case is, both in the conflicts of interest it exposes and in the attempt to promote authorization without basic regulatory data before the national regulator, the larger significance lies elsewhere. Israel was the first Western arena in which the EUL mechanism was put into practice. This is not merely a local event. It serves as a test case for a new model – a practical examination of the WHO’s ability to shape approval processes in a Western country without bearing direct regulatory responsibility.

Beyond the damage to sovereignty, the danger in this model is deeper. The WHO does not bear legal responsibility within states and is not subject to judicial or parliamentary oversight there. In a national regulatory system, a decision to authorize a vaccine is subject to a clear administrative law framework: documents can be demanded under freedom of information statutes, petitions can be filed in court, reasoning can be compelled, and decisions can be reviewed for reasonableness.

States that accept the EUL mechanism retain full legal and political responsibility for the decision, while key elements of its framework are shaped outside their systems. The national regulator will be required to defend in court a decision whose framework it did not set; the government will bear the public cost; and citizens will discover that the body that shaped the pathway is not subject to their courts and owes them no legal accountability.

A further concern is the lack of transparency and the state’s inability to independently assess the data presented to it. In recent years, the research literature has pointed to transparency gaps in the WHO’s decision-making mechanisms, especially in emergencies. Studies published in, among others, BMJ Global Health (2020), the Journal of Epidemiology and Global Health (2025), and Public Health Ethics described partial publication of minutes, difficulty reconstructing decision rationales, and a scope of influence not matched by parallel oversight mechanisms.

The Israeli case shows how such a gap translates at the national level: discussions not proactively published, near-exclusive reliance on materials originating from the organization itself, and progress along a regulatory pathway before the full data required for independent review had been provided.

In this case, the move was halted in Israel, but only after a principled decision had already been made and the pathway had already been mapped out, and only thanks to regulatory insistence on demanding data and holding to threshold standards, and civic insistence on exposing information that was not published.

Against this background, decisions by countries such as the United States to distance themselves from the World Health Organization can be understood in the context of broader debates over regulatory authority and accountability in global health governance. The Israeli case raises a more general question: to what extent can regulatory independence be maintained when key elements of the decision-making framework are shaped through external processes that precede national review?

The case exposes a widening gap between formal national authority and the external frameworks that increasingly determine regulatory outcomes in advance.

The Ministry of Health was asked to respond to these findings but chose not to comment.